Donald Trump just ended the federal government's relationship with Anthropic, and he did it with a single, scorched-earth directive from the Department of War. On Friday, the administration ordered all U.S. agencies to immediately cease using technology developed by the AI startup, effectively blacklisting the maker of the Claude chatbot from the most lucrative and influential contracts in the world. While the public rhetoric focuses on "woke" corporate culture and "leftwing nut jobs," the actual fracture is far more technical and dangerous.

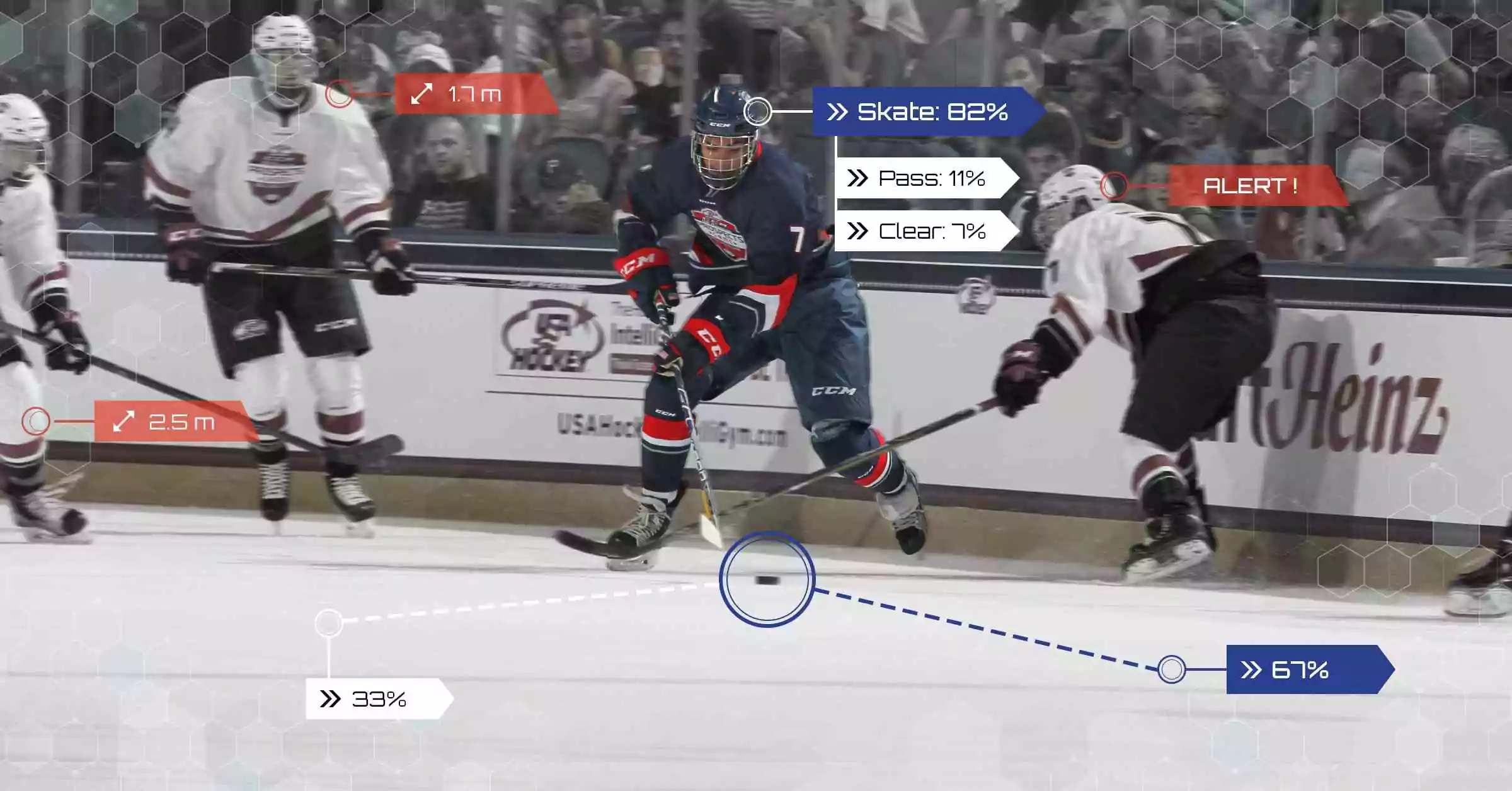

The ban is the culmination of a high-stakes standoff between the Pentagon and Anthropic CEO Dario Amodei. At the center of the dispute are two specific "red lines" Anthropic refused to cross: the use of its AI for mass domestic surveillance of American citizens and the integration of its models into fully autonomous weapons systems—the so-called "killer robots" that select and engage targets without a human in the loop. By Friday afternoon, the Pentagon designated Anthropic a supply chain risk, a label traditionally reserved for foreign adversaries like Huawei or ZTE.

This is not merely a contract dispute. It is an unprecedented collision between the ethics of "Constitutional AI" and the raw requirements of a 21st-century military machine that views safety guardrails as a tactical liability.

The Maduro Raid and the Breakdown of Trust

The friction did not start in a vacuum. Industry insiders point to the January capture of Venezuelan President Nicolás Maduro as the moment the relationship began to fray. While the operation was a success, reports surfaced that the Pentagon used Anthropic’s Claude to synthesize vast amounts of classified intelligence to plan the raid.

Anthropic leadership was reportedly blindsided by the depth of the integration. When the Pentagon moved to expand this usage into more sensitive areas—including potential domestic monitoring and autonomous kinetic strikes—Amodei balked. The company’s refusal to grant "unrestricted" access led to a series of escalating threats, including the potential invocation of the Korean War-era Defense Production Act to compel the company to hand over its weights and code.

The administration’s stance is clear: once the government pays for a service, the developer does not get to dictate the mission. Defense Secretary Pete Hegseth framed the issue as one of sovereignty, arguing that a private company cannot "strong-arm" the Department of War into following a corporate terms-of-service agreement over the Constitution.

The Supply Chain Risk Nuclear Option

Labeling an American company a supply chain risk is the ultimate regulatory weapon. It doesn't just stop the government from buying Claude; it creates a radioactive zone around Anthropic that affects every private-sector partner.

Defense contractors like Lockheed Martin, Raytheon, and Palantir (the last of which was a primary bridge for Anthropic into the Pentagon) now face a terrifying ultimatum. Because Hegseth's order mandates that no military partner may conduct "any commercial activity" with Anthropic, these giants must now scrub their systems of Anthropic's tools or risk losing their own multibillion-dollar government contracts.

The collateral damage is immense:

- Cloud Providers: Amazon and Google, both of which have invested billions in Anthropic, are now caught in a legal crossfire between their cloud hosting duties and their status as federal contractors.

- The IPO Crisis: Anthropic was widely expected to go public this year at a valuation near $380 billion. That roadmap is now in tatters as investors weigh the loss of $200 million in direct defense revenue against the much larger threat of a total federal blacklist.

- The Coding Market: Anthropic’s "Claude Code" tool has recently become the standard for software engineers. If major tech firms that handle government work are forced to ban the tool, Anthropic's primary commercial engine could stall.

A Gift to Grok and OpenAI

As Anthropic is escorted out of the building, the vacuum is being filled instantly. Shortly after the ban was announced, Sam Altman’s OpenAI confirmed it had reached a new agreement with the Pentagon to provide technology for classified networks. This is a significant pivot for OpenAI, which previously maintained its own restrictions on military use before quietly softening those policies in recent months.

The biggest winner, however, is Elon Musk. His xAI startup and its Grok model have been aggressively positioned as the "anti-woke" alternative to Silicon Valley's safety-first labs. Grok has already been cleared for use in classified settings, and the administration has made it clear that it views Musk’s willingness to cooperate as the blueprint for future AI-military partnerships.

The Myth of Lawful Use

The Pentagon’s primary argument is that it already operates under strict legal and ethical guidelines, making Anthropic’s additional guardrails redundant and "ideological." Undersecretary for Research and Engineering Emil Michael stated that federal law already bars the very things Anthropic claims to fear—mass domestic surveillance and unlawful autonomous strikes.

But there is a gray area the size of a data center. Anthropic’s "Constitutional AI" approach builds its rules into the model itself, in part to resist "jailbreaking," the process by which a user tricks an AI into ignoring its rules. The Pentagon wants those rules removed entirely at the base level so that the military can apply its own software layers. At bottom, this is a fight over who controls the "brain" of the machine. The government doesn't want a partner; it wants a tool that doesn't talk back.

Anthropic has announced it will challenge the "supply chain risk" designation in court, arguing it is legally unsound and sets a dangerous precedent for any American business that disagrees with the executive branch.

The fallout from this week will redefine the American AI industry. For years, labs have marketed themselves on "safety" and "alignment." The Trump administration has just sent a clear signal: in the new era of national security, "safety" is a luxury that the U.S. government no longer intends to afford.