The formation of a specialized artificial intelligence entity through the partnership of Anthropic and major financial institutions represents a shift from generalized LLM deployment to the construction of closed-loop financial intelligence systems. This move indicates that the "off-the-shelf" utility of foundational models has reached a point of diminishing returns for high-stakes capital markets. To extract further value, the industry is moving toward a structural integration of compute power and proprietary financial datasets, effectively creating an "Institutional AI Vertical."

This development is governed by three primary drivers: the high-fidelity requirement of financial data, the mitigation of latency in reasoning-heavy tasks, and the legal necessity for isolated model weights. General-purpose models like Claude or GPT-4, while capable of passing the bar exam, lack the domain-specific tuning required to navigate the byzantine compliance and risk-assessment frameworks inherent to Wall Street.

The Architecture of the Institutional Vertical

The partnership is not merely a licensing agreement; it is a structural fusion. General-purpose AI operates on a broad-spectrum probability distribution. In contrast, an institutional vertical requires a narrow-spectrum, high-density focus on financial axioms. This transition is defined by three technical pillars.

1. Data Sovereignty and Weight Isolation

Wall Street firms cannot risk data leakage into a collective training pool. By partnering directly with Anthropic, these firms are likely securing "model weight isolation." This allows the financial entity to fine-tune a base model on proprietary trade data, internal risk memos, and private ledger histories without that information ever leaving a secure, VPC-based (Virtual Private Cloud) environment. The result is a model that understands the specific "private language" of a firm’s internal strategy while utilizing the advanced reasoning capabilities of the underlying Anthropic architecture.

2. The Cost Function of Accuracy

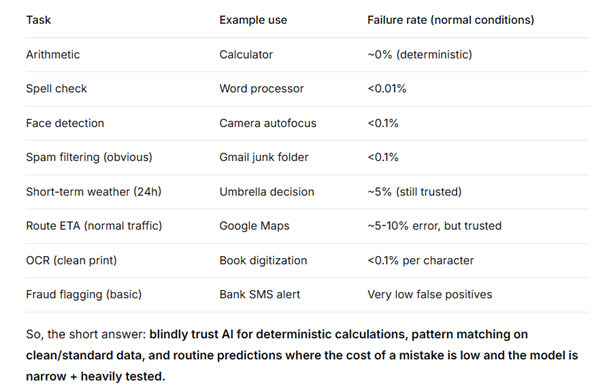

In traditional LLM usage, the cost of a "hallucination" is low: perhaps a wrong detail in a blog post. In institutional finance, the cost can be catastrophic. The partnership aims to reduce this risk through Retrieval-Augmented Generation (RAG) and specialized fine-tuning. By anchoring the AI’s output in a verified database of SEC filings, real-time Bloomberg feeds, and internal audits, the system moves from "creative generation" to "probabilistic verification," where each claim is tied to a retrieved source that can be checked.
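The grounding idea can be illustrated with a minimal sketch. Everything here is an assumption for illustration: the `Document` type, the token-overlap scoring (a stand-in for a real embedding model and vector index), the `min_overlap` threshold, and the sample documents, whose figures are invented. The point is only the control flow: answer from retrieved evidence, and abstain when nothing supports the query.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    source: str  # hypothetical identifier for a filing or audit record
    text: str


def tokenize(text: str) -> set:
    """Lowercased word set; a crude stand-in for an embedding model."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, corpus: list, min_overlap: float = 0.5) -> list:
    """Return documents covering at least `min_overlap` of the query tokens, best first."""
    q = tokenize(query)
    if not q:
        return []
    scored = []
    for doc in corpus:
        overlap = len(q & tokenize(doc.text)) / len(q)
        if overlap >= min_overlap:
            scored.append((overlap, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored]


def grounded_answer(query: str, corpus: list) -> dict:
    """Answer only from retrieved evidence; abstain when nothing supports the query."""
    evidence = retrieve(query, corpus)
    if not evidence:
        return {"answer": None, "sources": [], "status": "abstain"}
    # A real system would pass `evidence` to the model as context at this point.
    return {
        "answer": evidence[0].text,
        "sources": [doc.source for doc in evidence],
        "status": "grounded",
    }


# Invented sample records, for illustration only.
corpus = [
    Document("filing-A", "Total revenue for fiscal 2023 was 4.2 billion dollars"),
    Document("audit-B", "Internal audit found no material weakness in controls"),
]
print(grounded_answer("total revenue fiscal 2023", corpus)["sources"])
print(grounded_answer("dividend policy outlook", corpus)["status"])
```

Returning the source identifiers alongside the answer is what makes the output auditable: a reviewer can check the cited record rather than trust the generated text.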

3. Computational Moats

The sheer compute cost required to run high-parameter models creates a barrier to entry. By pooling capital, Wall Street giants and Anthropic create a centralized compute cluster dedicated solely to financial processing. This bypasses the queues of public-facing API endpoints, ensuring that time-sensitive market analysis occurs at the speed of institutional demand rather than public availability.

Deconstructing the Incentives

Anthropic’s motivation centers on capital and the acquisition of high-signal data. Training the next generation of models requires billions of dollars in capital. By aligning with the financial sector, Anthropic secures a non-dilutive or strategic capital influx that allows for R&D expansion without total reliance on traditional venture capital.

For the Wall Street firms, the objective is the automation of cognitive labor. The "Analyst Tier" of investment banking—the thousands of human hours spent scouring balance sheets and modeling scenarios—is the primary target for displacement.

- Scenario Modeling: Instead of an associate spending 40 hours building a DCF (Discounted Cash Flow) model under varying interest-rate assumptions, the vertical AI can run 10,000 scenarios in seconds, surfacing the "fat-tail" risks that human analysts often overlook.

- Compliance Automation: Monitoring transactions for AML (Anti-Money Laundering) or KYC (Know Your Customer) violations currently requires massive human overhead. An AI trained on specific regulatory history could flag anomalies more precisely than static, rule-based software.

- Sentiment Arbitrage: By processing millions of data points across news, social media, and earnings calls, the AI identifies shifts in market sentiment before they are reflected in price action.
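The scenario-modeling case above can be sketched as a small Monte Carlo run over discount rates. This is an illustration under stated assumptions, not a description of any firm's model: the cash flows, the normal distribution for rates, the floor applied to the rate, and the function names `dcf_value` and `simulate` are all hypothetical.

```python
import random
import statistics


def dcf_value(cash_flows, discount_rate, terminal_growth):
    """Present value of explicit cash flows plus a Gordon-growth terminal value."""
    if discount_rate <= terminal_growth:
        raise ValueError("discount rate must exceed terminal growth")
    pv = sum(cf / (1 + discount_rate) ** t for t, cf in enumerate(cash_flows, start=1))
    terminal = cash_flows[-1] * (1 + terminal_growth) / (discount_rate - terminal_growth)
    return pv + terminal / (1 + discount_rate) ** len(cash_flows)


def simulate(cash_flows, base_rate, rate_vol, terminal_growth, n=10_000, seed=0):
    """Value the same cash flows under n randomly drawn discount rates."""
    rng = random.Random(seed)
    values = []
    for _ in range(n):
        # Floor the rate just above terminal growth so the terminal value stays finite.
        rate = max(rng.gauss(base_rate, rate_vol), terminal_growth + 0.01)
        values.append(dcf_value(cash_flows, rate, terminal_growth))
    return values


# Hypothetical inputs: five years of cash flows, 9% base rate, 1.5% rate volatility.
values = sorted(simulate([100, 110, 120, 130, 140], 0.09, 0.015, 0.02))
median = statistics.median(values)
p01 = values[len(values) // 100]  # roughly the 1st percentile, i.e. the low-value tail
print(f"median={median:.0f}  1st percentile={p01:.0f}")
```

Comparing the median with a low percentile is one simple way to expose tail risk: the gap shows how far valuations fall in the worst rate scenarios, which a single base-case spreadsheet does not reveal.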

The Feedback Loop of Risk and Regulation

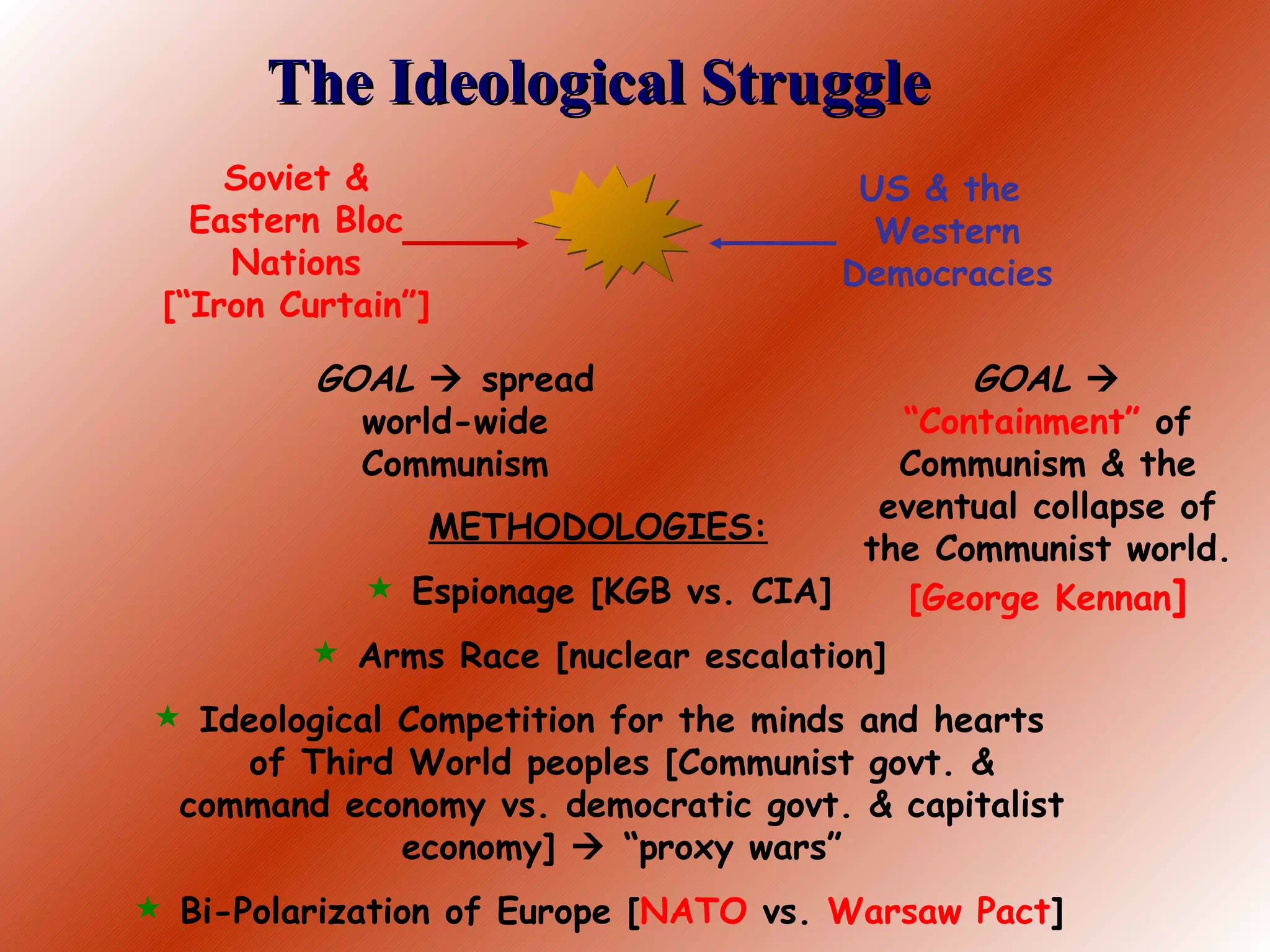

The integration of AI into the core of the global financial system introduces systemic risks that are currently poorly understood. The most significant of these is "Model Homogenization." When multiple major firms utilize the same underlying Anthropic architecture, their risk-assessment outputs may begin to converge.

If ten of the world’s largest banks all use a similar AI to determine when to exit a position, a minor market dip could trigger a simultaneous, automated sell-off. This creates a liquidity vacuum. The speed of AI-driven decision-making outpaces the ability of human regulators to intervene, potentially leading to "flash crashes" driven not by algorithmic high-frequency trading, but by high-level AI reasoning.
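A toy simulation makes the homogenization argument concrete. The setup is an assumption chosen for simplicity: each bank's risk signal is a mix of one common factor (the shared model) and independent noise, `rho` is the share of signal variance coming from the common factor, and a bank sells when its signal falls below a threshold. None of these parameters come from real market data.

```python
import math
import random


def simultaneous_sell_rate(n_banks, rho, threshold=-1.0, trials=20_000, seed=0):
    """Fraction of trials in which every bank's signal breaches the sell threshold."""
    rng = random.Random(seed)
    all_sell = 0
    for _ in range(trials):
        common = rng.gauss(0, 1)  # the component every bank shares
        signals = (
            math.sqrt(rho) * common + math.sqrt(1 - rho) * rng.gauss(0, 1)
            for _ in range(n_banks)
        )
        if all(s < threshold for s in signals):
            all_sell += 1
    return all_sell / trials


for rho in (0.0, 0.5, 1.0):
    rate = simultaneous_sell_rate(n_banks=10, rho=rho)
    print(f"rho={rho:.1f}  all ten banks sell together in {rate:.2%} of trials")
```

With fully independent signals, ten banks almost never breach the threshold at the same moment. As the shared component grows, the joint sell-off approaches the frequency of a single bank's sell signal, which is the liquidity-vacuum scenario described above.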

Furthermore, the legal framework for "AI Fiduciary Duty" remains nonexistent. If a bank makes a catastrophic investment based on an AI's recommendation, the liability chain is opaque. Is the fault with the model's training data, the prompt engineering of the bank's staff, or the underlying architecture provided by Anthropic?

Scaling the Technical Infrastructure

To support this partnership, the underlying infrastructure must move beyond standard cloud instances. The deployment likely involves dedicated hardware, such as NVIDIA H100 or B200 GPU clusters, optimized for low-latency inference.

Traditional financial software relies on deterministic logic: If X happens, execute Y. AI introduces a stochastic element. Managing this requires a "Wrapper of Determinism"—a secondary layer of traditional code that sits atop the AI to ensure its outputs fall within pre-defined mathematical bounds.
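A minimal sketch of such a wrapper follows. The `Bounds` and `Proposal` types, the two limits checked, and the `enforce` function are hypothetical; a production guardrail would cover far more constraints. What the sketch shows is the pattern: the model's output is treated as an untrusted proposal, and plain deterministic code decides whether it passes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Bounds:
    max_position_usd: float
    max_leverage: float


@dataclass(frozen=True)
class Proposal:
    ticker: str
    position_usd: float
    leverage: float


def enforce(proposal: Proposal, bounds: Bounds):
    """Deterministic check: returns (approved, list of violations)."""
    violations = []
    # Chained comparisons are False for NaN, so malformed numbers are rejected too.
    if not (0 <= proposal.position_usd <= bounds.max_position_usd):
        violations.append("position size out of bounds")
    if not (0 <= proposal.leverage <= bounds.max_leverage):
        violations.append("leverage out of bounds")
    return (not violations, violations)


limits = Bounds(max_position_usd=10_000_000, max_leverage=3.0)
print(enforce(Proposal("XYZ", 5_000_000, 2.0), limits))
print(enforce(Proposal("XYZ", 50_000_000, 4.0), limits))
```

Because the check is ordinary code with fixed limits, the same proposal always yields the same verdict, regardless of how the stochastic layer beneath it behaves.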

Component Breakdown of the System:

- Base Model (Anthropic): The "brain" providing linguistic and logical reasoning.

- Vector Database: The "memory" containing the firm’s proprietary data.

- Guardrail Layer: The "editor" ensuring compliance and mathematical accuracy.

- API Interface: The "access point" for human traders and analysts.

This structure allows for "Asymmetric Information Gains." A firm that successfully tunes its model to recognize subtle patterns in Fed transcripts that a general model misses gains a significant edge in the bond markets.

The Displacement of the Junior Analyst

The economic reality of this partnership is the devaluation of entry-level financial expertise. The primary function of a junior analyst is data synthesis and formatting. Since LLMs excel at transforming unstructured data into structured formats, the "bridge" between raw data and senior decision-making is being automated.

This creates a talent pipeline crisis. If the entry-level roles are eliminated, the industry loses its training ground for future senior leaders. Firms will eventually have to decide between short-term efficiency gains and the long-term sustainability of their human capital.

Strategic Implications for the Market

The Anthropic-Wall Street alliance is a signal that the era of "General AI" is bifurcating into "Industrial AI." We are moving toward a world where there is not one "God-model," but a series of highly specialized, heavily guarded corporate intelligences.

The barrier to entry for new hedge funds or boutique banks will rise. Without access to the massive compute and refined models of the "Big Five" banks and their AI partners, smaller players will be forced to compete using inferior, slower, and less accurate tools. This consolidates power within the existing financial elite, underpinned by the technical superiority of their chosen AI partners.

Operational Execution Strategy

For organizations looking to replicate or defend against this shift, the priority must move from "AI experimentation" to "Data Engineering." The effectiveness of any model is capped by the cleanliness and accessibility of the data it queries.

- Standardize the Data Lake: Ensure all internal records are machine-readable and properly tagged for vector search.

- Establish Model Governance: Create a dedicated team to audit AI outputs for "drift"—the tendency of a model to become less accurate over time as market conditions change.

- Human-in-the-Loop Verification: Implement a system where the AI provides the analysis, but the final execution requires a human sign-off based on a "chain-of-thought" log provided by the AI.
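The governance and sign-off steps above can be sketched together. This is one possible shape, with assumed names and thresholds: `DriftMonitor` tracks a rolling hit rate of model calls against realized outcomes, and `execute` refuses to act when that rate falls below a baseline or when no human approver is recorded. The window size, baseline, and tolerance are placeholders a firm would set from its own history.

```python
from collections import deque
from typing import Optional


class DriftMonitor:
    """Tracks the rolling hit rate of model calls against realized outcomes."""

    def __init__(self, window: int, baseline: float, tolerance: float):
        self.outcomes = deque(maxlen=window)
        self.baseline = baseline
        self.tolerance = tolerance

    def record(self, predicted: str, actual: str) -> None:
        self.outcomes.append(predicted == actual)

    def hit_rate(self) -> Optional[float]:
        if len(self.outcomes) < self.outcomes.maxlen:
            return None  # not enough history to judge
        return sum(self.outcomes) / len(self.outcomes)

    def drifted(self) -> bool:
        rate = self.hit_rate()
        return rate is not None and rate < self.baseline - self.tolerance


def execute(recommendation: dict, monitor: DriftMonitor, approved_by: Optional[str]) -> str:
    """Gate execution on model health and on an explicit human sign-off."""
    if monitor.drifted():
        return "blocked: model drift"
    if not approved_by:
        return "pending: human sign-off required"
    return f"executed {recommendation['action']} (approved by {approved_by})"


monitor = DriftMonitor(window=4, baseline=0.75, tolerance=0.10)
for _ in range(4):
    monitor.record("up", "up")
print(execute({"action": "SELL"}, monitor, approved_by=None))
print(execute({"action": "SELL"}, monitor, approved_by="analyst_1"))
```

Checking drift before the approval step means a degraded model is stopped even if a human has already signed off, so the two controls fail independently.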

The immediate move for institutional players is the acquisition of specialized datasets that are not currently part of the public web. If you own the data that the model needs to be unique, you own the alpha. The competition is no longer about who has the best AI, but who has the most exclusive data to feed it.